Article 1: What “Operational Truth” Actually Looks Like (A Real Cleaning Run)

Preface: Why This Matters

Many discussions about AI, operational governance, and ERPs focus on principles, frameworks, or dashboards. They rarely touch on how work actually happens.

At Altomi, we’ve built a system that captures operational truth as work occurs rather than reconstructing it later. This matters because AI can only be trusted if it learns from reality, not from assumptions, estimates, or fragmented systems.

This article demonstrates the principle with a real cleaning run in Multiverse, showing step by step how the work is captured end to end. Cleaning isn’t a side task: it’s an operational activity that matters for safety, compliance, cost, and production readiness.

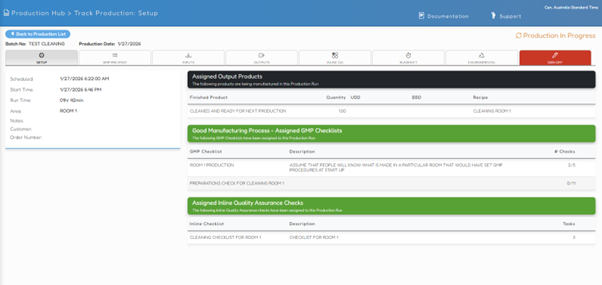

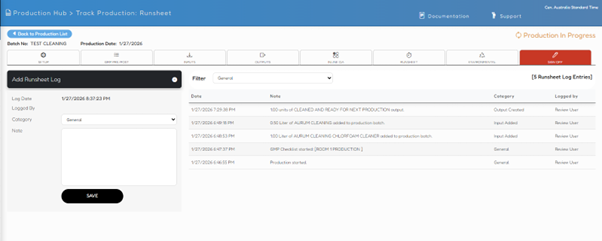

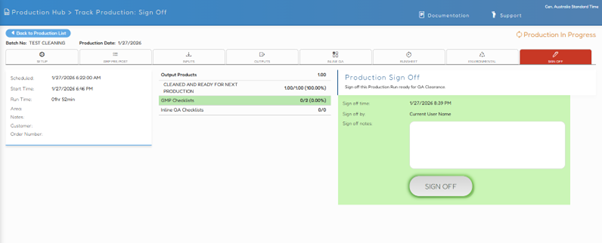

Batch number, date, area, scheduled start

Output: “CLEANED AND READY FOR NEXT PRODUCTION”

Runtime clock tracks actual work

Cleaning is work with a timeline, not metadata or a paper checklist.
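To make "work with a timeline" concrete, here is a minimal sketch of how such a run record could be modelled. It is not Multiverse's actual data model: the class name, field names, and sample values are all hypothetical, chosen only to mirror the setup fields and runtime clock described above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class CleaningRun:
    # Setup fields mentioned in the article; the names are hypothetical.
    batch_number: str
    area: str
    scheduled_start: datetime
    # Actual timestamps are stamped when the work happens, not typed in later.
    actual_start: Optional[datetime] = None
    actual_end: Optional[datetime] = None

    def start(self, now: datetime) -> None:
        self.actual_start = now

    def finish(self, now: datetime) -> None:
        if self.actual_start is None:
            raise ValueError("a run cannot finish before it has started")
        self.actual_end = now

    def runtime(self, now: datetime) -> timedelta:
        """Elapsed actual work time; keeps growing until the run is finished."""
        if self.actual_start is None:
            return timedelta(0)
        return (self.actual_end or now) - self.actual_start


# Sample values below are invented purely for illustration.
run = CleaningRun(
    batch_number="CLN-001",
    area="Filling Line 2",
    scheduled_start=datetime(2024, 1, 8, 6, 0, tzinfo=timezone.utc),
)
run.start(datetime(2024, 1, 8, 6, 12, tzinfo=timezone.utc))
run.finish(datetime(2024, 1, 8, 7, 2, tzinfo=timezone.utc))
print(run.runtime(datetime.now(timezone.utc)))  # 0:50:00
```

Keeping the scheduled start separate from the actual start is the point of the sketch: the plan and the work are both recorded, and the runtime is derived only from what actually happened.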

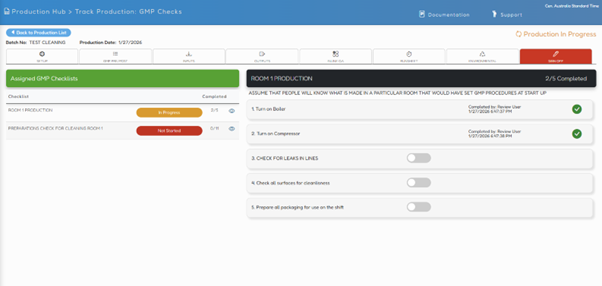

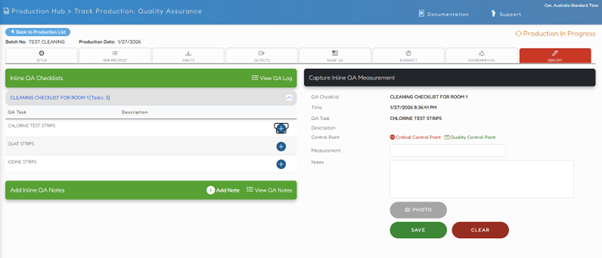

2. GMP Exists at the Moment of Action

Assigned at setup

Executed step-by-step

Timestamped and attributed to a person

Evidence created at the moment of work is truth; evidence created later is just explanation.

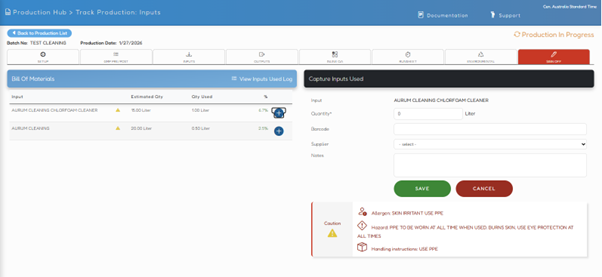

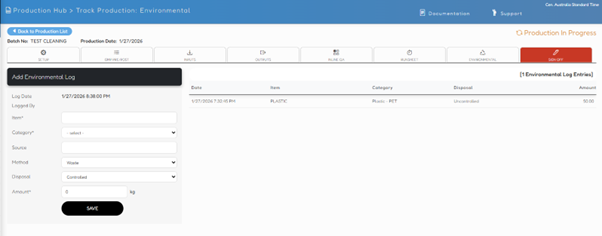

3. Inputs Are Consumed, Not Assumed

Chemicals logged with actual quantities

Safety, handling, and hazard context captured

Variance between expected and actual usage visible
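As a rough illustration of consumed versus assumed inputs, the sketch below compares a logged quantity with a planned one. It assumes a planned quantity exists to compare against, which the article implies but does not spell out; the class, field names, and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChemicalUsage:
    name: str
    planned_litres: float  # assumption: a planned quantity is recorded at setup
    actual_litres: float   # quantity actually logged during the run
    hazard_note: str       # safety and handling context kept with the record

    @property
    def variance_litres(self) -> float:
        """Actual minus planned; positive means more was used than planned."""
        return self.actual_litres - self.planned_litres

    @property
    def variance_pct(self) -> float:
        if self.planned_litres == 0:
            raise ValueError("percentage variance is undefined for a zero plan")
        return 100.0 * self.variance_litres / self.planned_litres


# Sample values are invented for illustration only.
usage = ChemicalUsage(
    name="sanitiser",
    planned_litres=2.0,
    actual_litres=2.5,
    hazard_note="corrosive; gloves and eye protection required",
)
print(usage.variance_litres)  # 0.5
print(usage.variance_pct)     # 25.0
```

Because the variance is derived from the logged quantity rather than entered by hand, it cannot drift from what was recorded at the moment of use.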

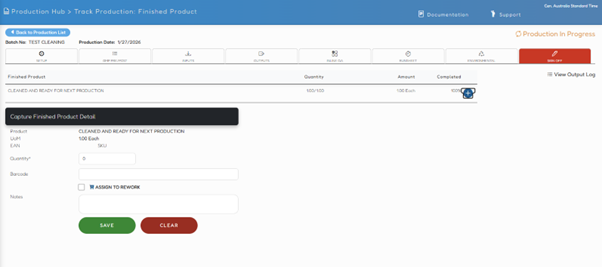

4. Output Is a State, Not a Product

State change:

“Cleaned and ready for next production”

Only true when tasks complete and QC passes
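The rule above can be sketched as a state that is derived from evidence rather than set by hand. This is an illustrative assumption about how such a gate might look, not the system's implementation; the "in progress" state name and the function name are hypothetical.

```python
from enum import Enum
from typing import Sequence


class AreaState(Enum):
    # Hypothetical name for any state short of ready.
    CLEANING_IN_PROGRESS = "Cleaning in progress"
    # Output state quoted in the article.
    CLEANED_AND_READY = "Cleaned and ready for next production"


def derive_state(tasks_done: Sequence[bool], qc_passed: bool) -> AreaState:
    """Return the ready state only when every task is complete and QC has passed."""
    # An empty task list never counts as "all tasks complete".
    if len(tasks_done) > 0 and all(tasks_done) and qc_passed:
        return AreaState.CLEANED_AND_READY
    return AreaState.CLEANING_IN_PROGRESS


print(derive_state([True, True, True], qc_passed=True).value)
# Cleaned and ready for next production
print(derive_state([True, False, True], qc_passed=True).value)
# Cleaning in progress
```

There is deliberately no setter for the ready state: the only way to reach it is for the underlying task and QC records to say so.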

5. Quality Is Measured, Not Declared

Test strips, measurements, control points logged inline

Time and operator attribution preserved

6. A Single, Immutable Timeline

No stitching. No reconciliation. No debate later.
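One common way to make a timeline tamper-evident is an append-only log in which each event stores a hash of the previous one. The article does not say how immutability is achieved, so the hash chain below is a swapped-in technique shown purely for illustration; all class names, method names, and sample events are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)  # frozen: an event cannot be modified after creation
class TimelineEvent:
    timestamp: str  # ISO 8601 time of the action
    operator: str   # person the action is attributed to
    action: str
    prev_hash: str  # digest of the preceding event ("" for the first)

    def digest(self) -> str:
        payload = json.dumps(
            [self.timestamp, self.operator, self.action, self.prev_hash]
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class Timeline:
    def __init__(self) -> None:
        self._events: List[TimelineEvent] = []
        self._head = ""  # digest of the most recent event

    def append(self, timestamp: str, operator: str, action: str) -> TimelineEvent:
        event = TimelineEvent(timestamp, operator, action, self._head)
        self._events.append(event)
        self._head = event.digest()
        return event

    def events(self) -> Tuple[TimelineEvent, ...]:
        return tuple(self._events)  # read-only view for callers

    def verify(self) -> bool:
        """Recompute the chain; any replaced or reordered event breaks it."""
        prev = ""
        for event in self._events:
            if event.prev_hash != prev:
                return False
            prev = event.digest()
        return prev == self._head


# Sample events are invented for illustration only.
timeline = Timeline()
timeline.append("2024-01-08T06:12:00Z", "operator-a", "rinse started")
timeline.append("2024-01-08T06:40:00Z", "operator-a", "test strip logged")
print(timeline.verify())  # True
```

With this shape there is nothing to stitch together afterwards: every step, input, and measurement lands in one ordered sequence, and a later alteration shows up as a failed verification.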

7. Environmental and Sign-Off Are Integral

Evidence exists before sign-off — not retroactively reconstructed.

Why This Matters

This is not cleaning software. This is operational truth: capturing work as it actually occurs.

Most systems:

Prescribe work

Infer work

Optimise work

Very few can operationalise work in reality. That difference is invisible until something goes wrong — then it’s the only thing that matters.

Next article: Why this level of capture is a prerequisite for safe AI, and why systems that generate first and justify later are structurally incapable of governance — no matter how many frameworks sit on top.